You’re usually not starting from zero. You already have too much.

A search tab is open. Another tab has a blog post that sounds confident but cites nothing. A third has a PDF with useful charts but no clear author. Social posts keep repeating the same claim. An AI tool gives you a neat answer in seconds, but you can’t tell which parts come from solid reporting and which parts come from recycled web sludge.

That’s the core problem when you’re trying to find credible sources for research. Many people don’t lack information. They lack a reliable filter.

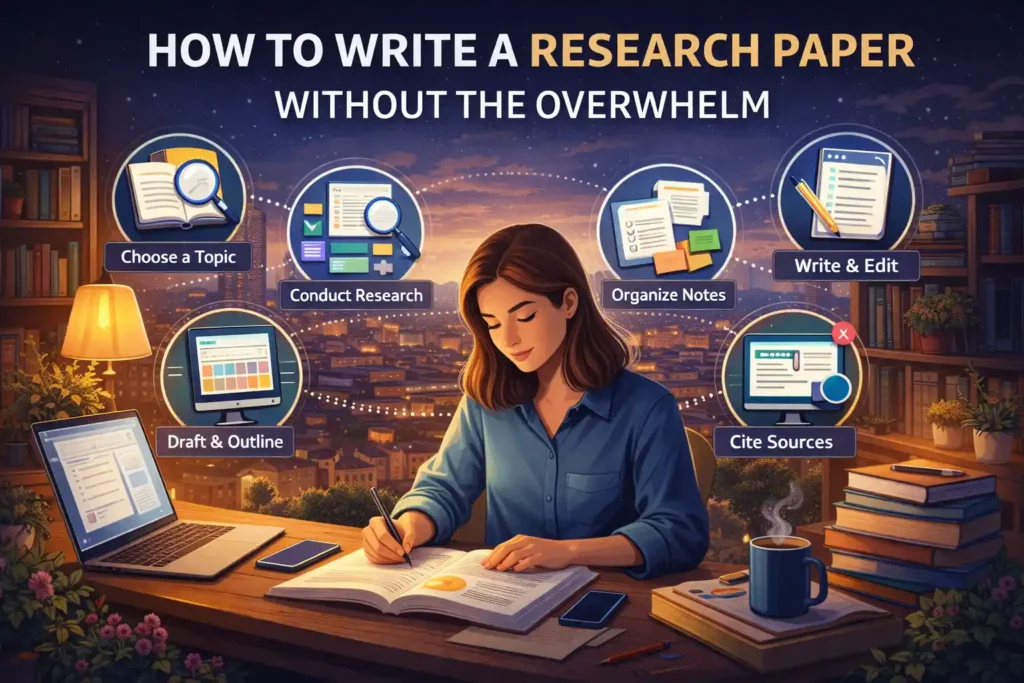

I’ve found that good research is less about hunting for a single “perfect” source and more about building a repeatable vetting habit. The workflow matters whether you’re writing a university paper, a reported essay, a blog post about pet nutrition, a piece on gaming trends, or a roundup on fashion business shifts. Academic topics and everyday topics use different source ecosystems, but the credibility questions are the same.

The practical standard is simple. You want sources that can withstand scrutiny after publication, not just before you click them.

The Modern Researcher’s Dilemma

A typical research session now looks messy from the start. You search one question and get a mix of search results, newsletter summaries, repackaged statistics, affiliate content, and old articles dressed up with a fresh timestamp. Some pages look polished enough to pass a quick glance. That’s exactly why people get trapped.

The confusion gets worse when your topic sits outside a classic academic lane. If you’re researching fashion sourcing, indie music scenes, pet care products, travel behavior, or game development culture, the best material may not live in scholarly journals at all. It may live in trade publications, government datasets, industry reports, expert interviews, conference slides, or NGO publications.

That’s why generic advice often falls short. “Use peer-reviewed sources” is good advice for some questions, but it’s incomplete for many real-world ones. If you’re writing about a current tourism shift or a platform policy change in gaming, waiting for journal publication alone won’t help much.

Practical rule: Start by asking what kind of evidence your topic requires, not what kind of source sounds most impressive.

When people struggle with source credibility, they usually make one of three mistakes:

- They trust presentation over proof. A sleek site and a confident tone aren’t evidence.

- They confuse popularity with authority. High search rank doesn’t mean high reliability.

- They stop at the first citation layer. They quote the article that quoted the report instead of checking the report itself.

The fix is a system. Start in better places. Evaluate every source with a clear framework. Trace important claims back to their origin. Use AI tools as assistants, not as final judges. Keep notes while you work so you can defend every factual statement you keep.

That approach is slower than skimming. It’s also how you avoid publishing something that falls apart the moment a careful reader checks your references.

Beyond Google: Where to Find Quality Information

Google is useful. It just shouldn’t be your only doorway.

Strong research usually starts by choosing the right source category for the question. If the question is scientific, you want journals and university material. If it’s about labor, education, population, or public policy, government data is often stronger. If it’s about fashion retail, gaming hardware, tourism flows, or pet industry practice, you may need trade and institutional material alongside academic sources.

Start with institutions that publish primary material

For many topics, the fastest route to credibility is going straight to the institution that collects or publishes the underlying information.

According to Population Education’s guide to reliable online data, websites ending in .edu and .gov are among the most reliable starting points for research. The same guide points to open-access resources: the Directory of Open Access Journals (DOAJ) provides free access to over 20,000 journals, and the Public Library of Science (PLoS) has published more than 200,000 peer-reviewed papers since 2003.

That matters because it changes your first move. Instead of searching the full claim in Google, search for the likely publishing body.

Use this logic:

- Employment and workforce questions often belong with the Bureau of Labor Statistics.

- Education questions often belong with the National Center for Education Statistics.

- Population and demographic questions often belong with census data.

- International development and cross-country comparisons often belong with UN data systems.

- University-run research pages are often useful for subject summaries and linked studies.

Use academic databases for depth, not just discovery

For academic and science-heavy topics, databases beat open-web search because they cut down noise.

A practical stack looks like this:

| Need | Better starting point | Why it helps |
|---|---|---|
| Peer-reviewed studies | Google Scholar | Fast discovery and citation trails |
| Open-access journal browsing | DOAJ | Good for free full-text articles |
| Discipline-specific scholarship | University library databases | Better filters and cleaner records |
| Author lookup | ORCID or institutional profile pages | Helps confirm identity and expertise |

Google Scholar is especially useful when you already know the topic language. If your search is vague, Scholar can still flood you with tangential material. Tighten it with exact phrases, date filters, and author names.

Search the open web like a professional

The open web still matters. You just need to search with intent.

A few tactics work well:

- Use site limits. Search within site:.gov, site:.edu, or a known institution’s site.

- Search by file type. Limiting results to PDFs (filetype:pdf) often surfaces reports, white papers, and official documents.

- Search the claim plus the likely source owner. If a statistic sounds like labor data, add the agency name.

- Search by methodology terms. Add phrases like survey, annual report, dataset, technical notes, or methodology.
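Those tactics are mechanical enough to script. Here’s a minimal Python sketch that turns one key phrase into a batch of search strings. It assumes Google-style exact-phrase quotes and `site:` and `filetype:` operators, which other search engines may handle differently; the function name `build_queries` and the example phrase are hypothetical.

```python
def build_queries(phrase: str, source_owner: str = "",
                  domains: tuple[str, ...] = (".gov", ".edu")) -> list[str]:
    """Turn one key phrase into search strings that apply the tactics above.

    Hypothetical helper: it only builds text to paste into a search engine
    and does not run any searches itself.
    """
    quoted = f'"{phrase}"'  # exact-phrase match
    queries = []
    # Site limits: keep results on institutional domains.
    for domain in domains:
        queries.append(f"{quoted} site:{domain}")
    # File type: original reports and official documents are often PDFs.
    queries.append(f"{quoted} filetype:pdf")
    # Likely source owner: pair the phrase with the body that collects the data.
    if source_owner:
        queries.append(f'{quoted} "{source_owner}"')
    # Methodology terms: surface documents that describe how data was gathered.
    for term in ("methodology", "technical notes", "annual report"):
        queries.append(f'{quoted} "{term}"')
    return queries


if __name__ == "__main__":
    for query in build_queries("remote work", "Bureau of Labor Statistics"):
        print(query)
```

The helper only writes queries. Judging what comes back is still your job.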

This is where you save real time. Instead of reading ten weak summaries, you find one original document and work outward from there.

A credible source often shows its work. Method notes, citations, named authors, and publication details are all signs that someone expects scrutiny.

Don’t ignore grey literature

Some of the best information for non-academic topics comes from what librarians call grey literature. That includes reports from NGOs, industry groups, think tanks, conference bodies, standards organizations, and public institutions.

Grey literature can be excellent. It can also be slanted. The difference usually shows up in transparency.

Look for:

- Named methodology

- Clear publication date

- Identifiable authors or analysts

- Disclosed funding or sponsors

- Direct access to data tables or source notes

For a topic like fashion, an industry report may tell you more about retail behavior than a journal article published years later. For tourism, a destination authority or international agency may offer current movement patterns that scholars haven’t yet analyzed in print. For pets, a veterinary association or animal welfare body may be more useful than a generic lifestyle site.

Use reputable news as a bridge, not a foundation

News outlets can help you discover leads, especially on recent developments. They’re often useful for identifying names, timelines, institutional announcements, and emerging debates. But a news article is usually a starting point, not the final authority for a factual claim.

If a news piece says a report found something important, go get the report.

That habit alone separates solid research from surface-level aggregation.

Evaluating Sources with the CRAAP Test

Finding sources is one skill. Judging them is another.

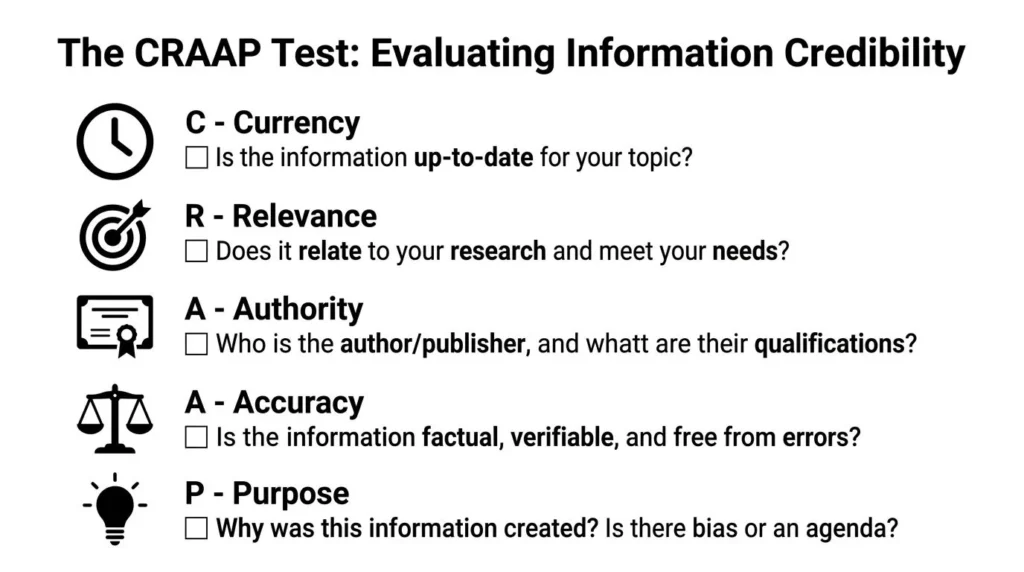

The CRAAP Test remains one of the most practical tools because it turns a fuzzy question (“Can I trust this?”) into five checks: Currency, Relevance, Authority, Accuracy, and Purpose.

A short visual explainer can help fix the framework in your mind.

The framework is simple. Applying it is harder.

Lehigh University’s CRAAP Test guide attaches numbers to two of these checks. On Authority, it says peer-reviewed journals show 95% higher expert validation rates; on Purpose, it says commercial sites have a 60% persuasion motive. The guide also states that success in finding credible information can rise to 92% among CRAAP-trained researchers.

Currency

Currency asks whether the source is current enough for your question.

That doesn’t always mean newest. If you’re studying historical criticism, a decades-old book may be essential. If you’re researching AI tools, travel rules, platform moderation, or consumer tech, old material can mislead you fast.

Check these first:

- Publication date

- Update date

- Whether links and references still work

- Whether the page reflects a changed reality

A common failure point is the article with a recent date stamp but old substance. Many publishers refresh pages cosmetically without revising the evidence.

Relevance

A source can be accurate and still be useless.

Relevance means the material answers your question at the right level. A broad explainer won’t carry a detailed argument. A technical paper may be too narrow for a general overview. A report on U.S. consumers may not support a claim about global markets.

Ask:

- Does this source match my research question?

- Is it too broad, too narrow, or off-angle?

- Is the audience academic, professional, or general public?

- Does it give me evidence, or just commentary?

Often, people keep weak sources here because they’re easy to read. Don’t confuse readability with fit.

Authority

Authority means the source has earned the right to be trusted on this topic.

Sometimes that authority comes from academic credentials. Sometimes it comes from institutional responsibility. A government statistician, a veterinary association, a museum archivist, or a trade analyst can all be authoritative within their lane.

Check authority through multiple signals:

| Signal | What to check |
|---|---|
| Author identity | Real name, bio, field experience |
| Institutional backing | University, agency, newsroom, association |
| Publication history | Has this person or group published serious work before? |
| Citations | Do credible people in the field cite them? |

Authority also applies to the publisher, not just the writer. A solid freelance writer on a weak content farm is still being filtered through a weak editorial system.

If you want to sharpen this habit, treat source evaluation as part of broader reasoning. This practical guide on how to improve critical thinking skills is useful because source vetting is really applied critical thinking under deadline.

If you can’t tell who wrote it, who reviewed it, or who stands behind it, treat it as unverified until proven otherwise.

Accuracy

Accuracy is where you stop reading passively and start checking.

Look for evidence that can be examined:

- References and footnotes

- Links to original data

- Methodology notes

- Specific claims that can be tested

- Consistency with other strong sources

Watch for the reverse signs too. Vague attributions are a major red flag. “Experts say.” “Studies show.” “Research proves.” Those phrases don’t help unless the source identifies the study, the experts, and the method.

A source doesn’t become accurate because it cites something. It becomes more trustworthy when those citations are relevant, traceable, and fairly represented.

Purpose

Purpose asks why the source exists.

Experienced researchers save themselves a lot of trouble by considering this. A page can be factually correct in parts and still be designed mainly to persuade, sell, recruit, or frame an issue in a self-serving way.

Purpose becomes obvious when you ask a few blunt questions:

- Who benefits if I believe this?

- Is the page trying to inform me or move me?

- Are counterarguments acknowledged?

- Are limitations stated?

Promotional writing often hides behind informational formatting. Product comparisons, “best of” roundups, sponsored white papers, investor-facing narratives, and advocacy reports can all contain useful information. They just require stricter skepticism.

A quick CRAAP pass before you save any source

Before you add a source to your notes, give it a fast screen:

- Date check

- Author check

- Publisher check

- Evidence check

- Motive check

If it passes those, keep reading. If it fails two or three, move on. Research gets easier when you stop trying to rescue weak sources.
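If you log sources in a script or spreadsheet, the quick screen maps onto a simple rule. The Python sketch below assumes you answer each of the five checks yourself as pass or fail; the code only counts failures and applies the threshold from this section. The class name `QuickScreen` is hypothetical, and treating a single failure as “keep reading” is an assumption, since the rule above only covers a clean pass and two or more failures.

```python
from dataclasses import dataclass, fields


@dataclass
class QuickScreen:
    """Your pass/fail answer for each check; the judgment is yours, not the code's."""
    date_ok: bool       # Currency: recent enough and still accurate
    author_ok: bool     # Authority: identifiable and qualified
    publisher_ok: bool  # Authority: an accountable outlet or institution
    evidence_ok: bool   # Accuracy: citations, data, or methods you can check
    motive_ok: bool     # Purpose: informs rather than sells or persuades

    def failures(self) -> list[str]:
        """Names of every check answered False, in declaration order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def verdict(self, threshold: int = 2) -> str:
        # Two or more failures: move on. Counting a single failure as
        # "keep reading" is an assumption the text leaves open.
        return "move on" if len(self.failures()) >= threshold else "keep reading"


if __name__ == "__main__":
    screen = QuickScreen(date_ok=True, author_ok=False, publisher_ok=False,
                         evidence_ok=True, motive_ok=True)
    print(screen.failures())
    print(screen.verdict())
```

Keeping the names of the failed checks, not just the count, tells you exactly what to look for if you decide a source is worth a second look.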

Verifying Claims and Spotting Misinformation

Evaluation tells you whether a source looks credible. Verification tells you whether a specific claim is true.

That difference matters. A respectable-looking article can still repeat a bad statistic, misread a paper, or quote a source out of context. This is why journalists and librarians use lateral reading. Instead of staying on the page and absorbing its argument, they open new tabs and investigate around it.

Use lateral reading, not tunnel reading

When you hit an important claim, stop scrolling and branch outward.

Check:

- Who is the author outside this page

- What other outlets say about the same claim

- Whether a cited report exists

- Whether the report says what the article says it says

- Who funded or commissioned the underlying work

This process is slower than taking a screenshot and moving on. It’s also how you catch the common failures: cherry-picked findings, dead links, misquoted experts, and old data presented as new.

Trace statistics to the primary source

The cleanest version of any statistic is usually the first published version with a methodology note attached.

A practical example: if a blog says “a study found” and links to another blog, that’s not enough. Keep tracing until you reach the journal article, dataset, official release, or institutional report. If you can’t find that origin, treat the number as unstable.

The same rule applies to quotes. If a sentence is important enough to include, find where it was originally said or written.

The most reliable fact is the one you can trace to its point of origin and read in context.
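Tracing a statistic is a walk along a chain of attributions until you reach something that is itself the origin. This Python sketch models that walk over a small map of who cites whom. It assumes you fill in the map yourself by opening each link and marking whether a page is a primary source; the `.example` addresses are placeholders, and `Source` and `trace_to_origin` are hypothetical names. Following the rule above, it treats a dead end, an unchecked link, or a citation loop as unstable.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Source:
    is_primary: bool             # journal article, dataset, official release, or report
    cites: Optional[str] = None  # where this page attributes the claim, if anywhere


def trace_to_origin(start: str, sources: dict[str, Source]) -> tuple[list[str], str]:
    """Follow attribution links from `start`; return the path walked and a status."""
    path: list[str] = []
    current: Optional[str] = start
    while current is not None:
        if current in path:
            return path, "unstable: citation loop"
        path.append(current)
        if current not in sources:
            # The chain points somewhere you haven't checked (or can't open).
            return path, "unstable: link could not be checked"
        source = sources[current]
        if source.is_primary:
            return path, "primary source found"
        current = source.cites
    # The chain ended without reaching a primary source.
    return path, "unstable: no origin found"


if __name__ == "__main__":
    sources = {
        "blog-a.example/post": Source(False, cites="blog-b.example/stats"),
        "blog-b.example/stats": Source(False, cites="agency.example/report.pdf"),
        "agency.example/report.pdf": Source(True),
    }
    path, status = trace_to_origin("blog-a.example/post", sources)
    print(" -> ".join(path))
    print(status)
```

Notice that the length of the chain never counts in a claim’s favor. Only the end point matters.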

Use P.R.O.V.E.N. when the claim is high-stakes

For more advanced vetting, the P.R.O.V.E.N. framework helps: Position, Purpose, Objectivity, Verifiability, Expertise, Newness.

According to the EAIE guide on determining credibility in research reports, peer-reviewed journals offer 95% reliability compared with 50% for blogs, and cross-checking with primary data can produce an 88% accuracy uplift. The guide also notes that Retraction Watch tracks over 20,000 flawed papers. Taken together, those points push you beyond surface trust and into active confirmation.

Here’s where P.R.O.V.E.N. earns its keep:

| Element | What you’re looking for |
|---|---|
| Position | What context produced this source? |
| Purpose | What is it trying to do? |
| Objectivity | What might it be leaving out? |
| Verifiability | Can you check the evidence directly? |
| Expertise | Does the author or publisher know this field? |
| Newness | Is it current enough for the claim? |

Watch for source laundering

A lot of misinformation doesn’t look wild. It looks polished.

A weak claim often gets “laundered” this way:

- Someone posts it on a blog or social platform.

- Another site repeats it.

- A newsletter cites that site.

- A content writer cites the newsletter.

- The claim now looks established because it appears in multiple places.

It isn’t established. It’s just repeated.

If you’re interested in how this spread works in public information ecosystems, this piece on what is citizen journalism gives useful context on how speed, access, and verification can pull against each other.

Keep a retraction and correction mindset

Not every flawed source is malicious. Some are outdated. Some are corrected later. Some papers are retracted. Some articles change after criticism.

That’s why serious researchers don’t just collect links. They collect defensible links. If you can explain why a claim is trustworthy, where it came from, and what its limits are, you’re in good shape.

If you can’t, don’t use it.

Finding Credible Sources for Everyday Topics

Many research guides get thin at this point. They’re built for essays and lab reports, then they go quiet when the topic shifts to fashion, gaming, pets, music, tourism, or entertainment.

That gap is real. A Microsoft article on finding credible sources notes that a 2023 American Library Association study found 68% of non-academic researchers struggle with source validation. The same article cites an October 2025 Nature Index report saying AI-assisted tools such as Perplexity can support 45% faster source vetting and a 30% reduction in misinformation use, though human judgment still matters.

What credibility looks like outside academia

For non-academic topics, credibility often comes from a mix of source types rather than one prestige label.

For example:

- Fashion often requires trade reporting, brand disclosures, supply-chain reporting, and expert commentary from designers, buyers, or analysts.

- Gaming often requires developer statements, platform documentation, patch notes, technical testing, and reputable specialist reporting.

- Pet care often calls for veterinary guidance, animal welfare organizations, product safety information, and practitioner expertise.

- Tourism often depends on official destination bodies, transport authorities, public advisories, and field reporting.

- Entertainment and music often benefit from interviews, credits databases, legal filings, festival programming, and established critics.

The key question changes from “Is this peer-reviewed?” to “Who would reasonably know this, and how would they show it?”

Use trade publications carefully

Trade publications can be excellent because they’re close to the industry. They also have blind spots because they depend on industry access and relationships.

Use them best when they provide:

- Named reporters or analysts

- Direct quotes from accountable people

- Documents, filings, or official statements

- Clear distinctions between news, analysis, and sponsored material

Treat trend pieces with caution if they rely on unnamed insiders, recycled press releases, or broad claims with no documents attached.

AI tools can help, but they can’t absolve you

AI-assisted search and verification tools are useful for triage. They can surface likely sources, help compare summaries, and point you toward original documents faster than manual searching alone.

What they can’t do reliably is make judgment calls for you.

Use AI tools for tasks like:

- Locating candidate sources

- Surfacing possible primary documents

- Comparing wording across outlets

- Generating follow-up questions to investigate

Don’t use them as your final authority on truth. Always click through. Always inspect the source itself. Always verify the claim you plan to publish.

Human judgment matters most when a claim is convenient, emotionally satisfying, or perfectly aligned with what you expected to find.

A fast test for expert blogs and creator sources

Sometimes the best source on a niche topic is an independent expert, not a formal institution. That’s common in gaming hardware, beauty, music production, specialty pet care, and travel logistics.

Check four things:

| Question | Strong sign |
|---|---|
| Do they show work? | Screenshots, documents, methods, citations |
| Do they correct mistakes? | Visible updates or correction notes |
| Do they separate fact from opinion? | Clear labeling and careful wording |
| Do they have real domain expertise? | Relevant background and consistent track record |

An expert without transparency is still risky. A transparent expert with evidence can be very strong.

Building Your Research Workflow

Good research falls apart when the workflow is chaotic.

If you save random tabs, copy loose quotes into a document, and plan to “sort it out later,” you’ll eventually lose track of what came from where. That’s how writers end up with unsupported claims and broken citations.

Build a simple system you’ll use

You don’t need a complicated setup. You need a consistent one.

A practical workflow looks like this:

- Collect sources in one place using Zotero, Mendeley, or a structured notes app.

- Tag each source by topic and source type.

- Record why you trust it. Add a note on author, publisher, date, and limits.

- Pull only verified quotes and claims into your draft notes.

- Cite while drafting, not at the end.

Zotero is excellent for web capture and citation management. Mendeley works well for PDF-heavy reading. A plain spreadsheet also works if you stay disciplined.

Keep a credibility note beside each source

One small habit makes a big difference. Next to every saved source, write one sentence explaining why it belongs.

Examples:

- Government dataset with direct methodology

- Reporter cites court filing and includes original document

- Veterinary association guidance updated recently

- Trade report useful for context, but promotional bias possible

That note forces you to evaluate sources while you’re still alert, not after you’ve built half a draft on shaky material.

Treat citation as part of your authority

Citing sources isn’t just about avoiding plagiarism. It shows readers where your claims came from and lets them inspect your work.

Writers who want to publish regularly should treat sourcing as part of their public reputation. If you plan to build an audience or contribute articles online, this guide on how to publish articles online is a useful companion because publication quality depends heavily on source quality.

The most credible researchers aren’t the ones who sound smartest. They’re the ones whose evidence still holds up after readers start checking.

Frequently Asked Questions About Source Credibility

Is Wikipedia a credible source?

Wikipedia is a useful starting point, not a final source for most research. Use it to identify terms, debates, and citations worth following. Then go to the cited material and evaluate that directly.

Can I use a source with no named author?

Sometimes, yes. Government pages, institutional reports, and newsroom explainers may be published under an organizational name. In that case, evaluate the publisher, publication details, and editorial standards. If there’s no accountable individual or institution, be cautious.

Are social media posts ever valid sources?

They can be valid as evidence of what was posted or said, especially for public statements, brand announcements, or cultural analysis. They are usually weak as proof that a factual claim is true. Cite the post for the statement itself, then verify the underlying claim elsewhere.

What if two credible sources disagree?

That happens often. Check dates, definitions, methods, geography, and scope. Two sources may appear to conflict while measuring different things. If the disagreement is real, present it forthrightly instead of forcing a false consensus.

How many sources do I need?

There isn’t a universal number. Use enough high-quality sources to support your central claims, reflect the complexity of the topic, and cross-check important facts. Fewer strong sources beat a long list of weak ones.

If you enjoy practical guides like this, explore maxijournal.com for approachable writing across science, technology, health, sports, business, arts, tourism, fashion, pets, entertainment, education, and games. It’s also a useful place for writers who want to study how well-structured online articles reach broad audiences without sacrificing credibility.
